Voice Control (iOS, Mac) happens on device, and my experience is that it’s faster and more accurate than Siri, since it’s working with a much more restricted domain of commands and doesn’t need to talk to a server. You can also freely mix it with dictation, so you can navigate within an app and enter text into fields without switching between separate listening modes, though there are separate Dictation and Command modes if you prefer not to rely on this. Voice Control itself is also a mode, which is great because you don’t have to prefix every command with “Hey Siri.” You can turn on Voice Control either in Settings or by asking Siri. Once enabled, you can toggle it by saying “Wake up” or “Go to sleep.” The catch is that, out of the box, Voice Control only has a system-level vocabulary. You can tell it to tap a button by name or by number and dictate text into fields, but it doesn’t know about OmniFocus-specific terms such as actions, projects, or defer dates. iOS and macOS do, however, let you add your own custom Voice Control commands, which are akin to the old Speakable Items.

I find myself using Siri to make new actions to remind myself to adjust other actions, because that’s easier than making the changes directly right then. That’s not ideal, because a major benefit of OmniFocus is that it lets me get stuff out of my head; having to remember which changes to apply later works against that. So, my hope is that I can use these new Voice Control features as sort of the equivalent to keyboard shortcuts. In theory, voice can offer quick random access to commands without having to first locate them with my eyes and then fingers. It can also work hands-free, when my fingers are otherwise occupied or in gloves. It’s important to note the differences between VoiceOver, Voice Control, and Siri: Voice Control lets users control the entire device with spoken commands and specialized tools, while Siri is an intelligent assistant that lets users ask for information and complete everyday tasks using natural language.

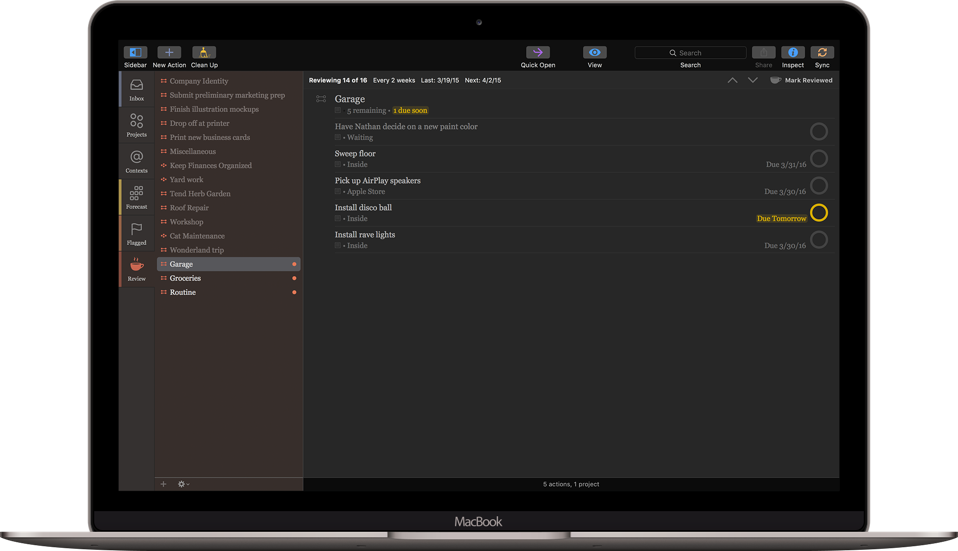


For someone with motor limitations, Voice Control is transformative, but one doesn’t need to have motor limitations to have it enhance the experience of using OmniFocus. As someone without such limitations, I’m most interested in the potential for voice interactions on my iPhone, where the lack of a physical keyboard makes many tasks feel slow and plodding. I love using my iPhone (and now my Apple Watch) to create new OmniFocus actions, and I’ve long done this by using Siri to add reminders. But I postpone as much of the other stuff as possible until I’m back at my Mac, because I know it will be so much easier there.


OmniFocus 3.13 provides a wide range of improvements to Omni Automation, most notably support for Speech Synthesis. With these automation enhancements, OmniFocus 3.13 can now take full advantage of the new Voice Control features offered in the latest iOS, iPadOS, and macOS releases, delivering an incredible level of voice-driven productivity. If you’re new to Apple’s Voice Control feature, it lets you control a Mac, iPhone, or iPad entirely with your voice. Voice Control offers an enhanced command and dictation experience, giving full access to every major function of the operating system.